Milgram

Milgram is intended for use on projects that may involve sensitive data. This applies to both storage and computation. If you have any questions about this policy, please contact us.

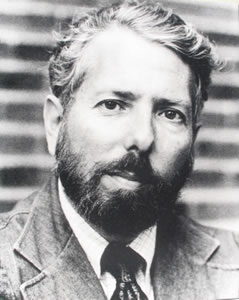

Milgram is named for Dr. Stanley Milgram, a psychologist who researched the behavioral motivations behind social awareness in individuals and obedience to authority figures. He conducted several famous experiments during his professorship at Yale University including the lost-letter experiment, the small-world experiment, and the Milgram experiment.

Milgram Usage Policies

Users wishing to use Milgram must agree to the following:

- All Milgram users must have fulfilled and be current with Yale's HIPAA training requirement.

- Since Milgram's resources are limited, we ask that you only use Milgram for work on and storage of sensitive data, and that you do your other high performance computing on our other clusters.

NIH Controlled-Access Data and Repositories

Effective January 25, 2025, new or renewed Data Use Certifications for NIH Controlled-Access Data and Repositories must adhere to the NIH Security Best Practices for Users of Controlled-Access Data. These data can now be hosted and analyzed on YCRC's NIST 800-171 compliant Hopper cluster. See the Hopper documentation for information on requesting access.

Access the Cluster

Once you have an account, the cluster can be accessed via ssh or through the Open OnDemand web portal.

Info

Connections to Milgram can only be made from the Yale VPN (access.yale.edu), even if you are already on campus (YaleSecure or ethernet). See our VPN page for setup instructions.

Warning

Your VPN connection must be less than 24 hours old to connect to Milgram. If you are unable to connect to Milgram, try resetting your VPN connection.
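
As an illustration of the ssh route, here is a minimal sketch. The NetID `abc123` is a placeholder, and the login hostname `milgram.ycrc.yale.edu` is an assumption that does not appear in the text above, so confirm it against the YCRC documentation. The snippet only assembles and prints the command, which makes it safe to run anywhere.

```shell
# Placeholder NetID; replace with your own.
netid="abc123"
# Assumed login hostname (not stated on this page); verify before use.
login_host="milgram.ycrc.yale.edu"

# Assemble the ssh command without running it.
ssh_cmd="ssh ${netid}@${login_host}"
echo "With the Yale VPN connected, run: ${ssh_cmd}"
```

If the connection times out, check that your VPN session is active and recent before retrying.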

System Status and Monitoring

For system status messages and the schedule for upcoming maintenance, please see the system status page. For a current node-level view of job activity, see the cluster monitor page (VPN only).

Installed Applications

A large number of software packages and applications are installed on our clusters. These are made available to researchers via software modules.
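
A minimal sketch of working with modules follows. It assumes the cluster provides the usual `module` command (as Lmod does) and uses `R/4.4.1` as an example drawn from the version table on this page. The full module name on Milgram may carry a toolchain suffix, so treat the exact string as an assumption and check `module avail` first.

```shell
# Example package and version taken from the module table on this page.
pkg="R"
version="4.4.1"
module_spec="${pkg}/${version}"

if command -v module >/dev/null 2>&1; then
    # List the installed builds of this package.
    module avail "${pkg}"
    # Load it; you may need the full name shown by `module avail`.
    module load "${module_spec}"
    # Confirm what is currently loaded.
    module list
else
    echo "module command not found; on the cluster you would run: module load ${module_spec}"
fi
```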

Available Software Modules

| Package | Versions |
|---|---|
| AFNI | 24.0.15,24.1.22,2023.1.07 |
| ANTs | 2.3.5 |
| ATK | 2.38.0 |
| Armadillo | 10.2.1,11.4.3 |
| Arrow | 11.0.0,14.0.1,16.1.0 |
| Autoconf | 2.69,2.71 |
| Automake | 1.16.2,1.16.5 |
| Autotools | 20200321,20220317 |
| BCFtools | 1.17 |
| BLIS | 0.9.0 |
| BWA | 0.7.17 |
| BeautifulSoup | 4.11.1 |
| Bison | 3.7.1,3.8.2,3.8.2 |
| Boost | 1.74.0,1.74.0,1.81.0 |
| Brotli | 1.0.9,1.0.9 |
| Brunsli | 0.1 |
| CFITSIO | 4.2.0 |
| CMake | 3.18.4,3.20.1,3.24.3 |
| CUDA | 11.1.1,12.0.0,12.1.1 |
| CUDAcore | 11.1.1 |
| CharLS | 2.4.2 |
| Check | 0.15.2,0.15.2 |
| DB | 18.1.40,18.1.40 |
| DBus | 1.13.18,1.15.2 |
| Doxygen | 1.8.20,1.9.5 |
| EasyBuild | 4.9.0,4.9.1,4.9.2,4.9.3 |
| Eigen | 3.3.8,3.3.9,3.4.0 |
| Emacs | 28.2 |
| FFTW | 3.3.8,3.3.8,3.3.10 |
| FFTW.MPI | 3.3.10 |
| FFmpeg | 4.3.1,5.1.2 |
| FLAC | 1.3.3,1.4.2 |
| FSL | 6.0.5.1,6.0.5.2,6.0.7.9 |
| FlexiBLAS | 3.2.1 |
| FreeSurfer | |
| FriBidi | 1.0.10,1.0.12 |
| GATK | 4.5.0.0 |
| GCC | 10.2.0,12.2.0 |
| GCCcore | 10.2.0,12.2.0 |
| GDAL | 3.2.1,3.6.2 |
| GDRCopy | 2.1,2.3,2.3.1 |
| GEOS | 3.9.1,3.11.1 |
| GLPK | 4.65,5.0 |
| GLib | 2.66.1,2.75.0 |
| GLibmm | 2.49.7 |
| GMP | 6.2.0,6.2.1 |
| GObject-Introspection | 1.66.1,1.74.0 |
| GSL | 2.6,2.7 |
| GST-plugins-bad | 1.22.5 |
| GST-plugins-base | 1.22.1 |
| GStreamer | 1.22.1 |
| GTK3 | 3.24.35 |
| GTK4 | 4.11.3 |
| GTS | 0.7.6 |
| Gdk-Pixbuf | 2.40.0,2.42.10 |
| Ghostscript | 9.53.3,10.0.0 |
| Globus-CLI | 3.30.1 |
| Go | 1.17.6,1.21.2 |
| Graphene | 1.10.8 |
| Graphviz | 2.47.0 |
| HDF | 4.2.15,4.2.15 |
| HDF5 | 1.10.7,1.10.7,1.14.0 |
| HTSlib | 1.17 |
| HarfBuzz | 2.6.7,5.3.1 |
| Highway | 1.0.3 |
| Hypre | 2.20.0 |
| ICU | 67.1,72.1 |
| IPython | 8.14.0 |
| ITK | 5.2.1 |
| ImageMagick | 7.0.10,7.1.0 |
| Imath | 3.1.6 |
| JasPer | 2.0.24,4.0.0 |
| Java | 11.0.16,17.0.4 |
| LAME | 3.100,3.100 |
| LDC | 1.24.0,1.35.0 |
| LERC | 4.0.0 |
| LLVM | 11.0.0,15.0.5 |
| LibTIFF | 4.1.0,4.4.0 |
| LittleCMS | 2.11,2.14 |
| M4 | 1.4.18,1.4.19,1.4.19 |
| MATLAB | 2022b,2023a |
| MCR | R2019b.8,R2019b.9 |
| METIS | 5.1.0 |
| MPFR | 4.1.0,4.2.0 |
| MUMPS | 5.3.5 |
| Mako | 1.1.3,1.2.4 |
| Mesa | 20.2.1,22.2.4 |
| Meson | 0.55.3,0.64.0 |
| NASM | 2.15.05,2.15.05 |
| NLopt | 2.6.2,2.7.0,2.7.1 |
| NSPR | 4.29 |
| NSS | 3.57 |
| Netpbm | 10.86.41 |
| Nextflow | 24.04.2 |
| Ninja | 1.10.1,1.11.1 |
| OpenBLAS | 0.3.12,0.3.21 |
| OpenCV | 4.8.0 |
| OpenEXR | 3.1.5 |
| OpenFace | 2.2.0 |
| OpenJPEG | 2.5.0 |
| OpenMPI | 4.0.5,4.0.5,4.1.4 |
| OpenPGM | 5.2.122 |
| OpenSSL | 1.1 |
| PCRE | 8.44,8.45 |
| PCRE2 | 10.35,10.40 |
| PETSc | 3.15.0 |
| PROJ | 7.2.1,9.1.1 |
| Pango | 1.47.0,1.50.12 |
| Perl | 5.32.0,5.32.0,5.36.0 |
| Pillow | 9.4.0 |
| PostgreSQL | 15.2 |
| PyCairo | 1.24.0 |
| PyGObject | 3.44.1 |
| PyQt5 | 5.15.7 |
| PyYAML | 6.0 |
| Python | 2.7.18,3.8.6,3.10.8,3.10.8 |
| Qhull | 2020.2 |
| Qt5 | 5.14.2 |
| Qwt | 6.1.5 |
| R | 4.2.0,4.3.2,4.4.1 |
| R-bundle-Bioconductor | 3.18,3.19 |
| R-bundle-CRAN | 2023.12,2024.06 |
| RE2 | 2023 |
| RapidJSON | 1.1.0 |
| Rust | 1.65.0 |
| SAMtools | 1.20 |
| SAS | 9.4M8,9.4 |
| SCOTCH | 6.1.0 |
| SDL2 | 2.26.3 |
| SPM | 12.5_r7771 |
| SQLite | 3.33.0,3.39.4 |
| SWIG | 4.0.2 |
| Sambamba | 1.0.1 |
| ScaLAPACK | 2.1.0,2.1.0,2.2.0 |
| SciPy-bundle | 2020.11,2020.11,2023.02 |
| Slicer | 5.6.2 |
| Spark | 3.5.1,3.5.3,3.5.4 |
| SuiteSparse | 5.8.1 |
| Szip | 2.1.1,2.1.1 |
| Tcl | 8.6.10,8.6.12 |
| Tk | 8.6.10,8.6.12 |
| Tkinter | 3.10.8 |
| UCC | 1.1.0 |
| UCX | 1.9.0,1.9.0,1.13.1 |
| UCX-CUDA | 1.13.1 |
| UDUNITS | 2.2.26,2.2.28 |
| UnZip | 6.0,6.0 |
| VTK | 8.2.0,9.0.1,9.1.0 |
| Wayland | 1.22.0 |
| X11 | 20201008,20221110 |
| XZ | 5.2.5,5.2.7 |
| Xerces-C++ | 3.2.4 |
| Xvfb | 1.20.9,21.1.6 |
| Yasm | 1.3.0,1.3.0 |
| ZeroMQ | 4.3.4 |
| aiohttp | 3.8.5 |
| annovar | 20200607 |
| ant | 1.10.12 |
| arpack-ng | 3.8.0,3.8.0 |
| arrow-R | 14.0.0.2,16.1.0 |
| at-spi2-atk | 2.38.0 |
| at-spi2-core | 2.46.0 |
| attr | 2.4.48 |
| awscli | 2.13.20 |
| binutils | 2.35,2.39,2.39 |
| bzip2 | 1.0.8,1.0.8 |
| cURL | 7.72.0,7.86.0 |
| cairo | 1.16.0,1.17.4 |
| cppy | 1.2.1 |
| cuDNN | 8.8.0.121 |
| dcm2niix | 1.0.20230411 |
| dlib | 19.22 |
| double-conversion | 3.1.5 |
| dtcmp | 1.1.2 |
| elfutils | 0.189 |
| expat | 2.2.9,2.4.9 |
| ffnvcodec | 11.1.5.2 |
| flex | 2.6.4,2.6.4 |
| fmriprep | 23.2.1 |
| fontconfig | 2.13.92,2.14.1 |
| foss | 2020b,2022b |
| fosscuda | 2020b |
| freeglut | 3.2.1,3.4.0 |
| freetype | 2.10.3,2.12.1 |
| gcccuda | 2020b |
| gettext | 0.21,0.21.1,0.21.1 |
| gfbf | 2022b |
| giflib | 5.2.1 |
| git | 2.28.0,2.38.1 |
| git-lfs | 3.2.0 |
| gompi | 2020b,2022b |
| gompic | 2020b |
| googletest | 1.12.1 |
| gperf | 3.1,3.1 |
| groff | 1.22.4,1.22.4 |
| gzip | 1.10,1.12 |
| h5py | 3.1.0 |
| help2man | 1.47.16,1.49.2 |
| hwloc | 2.2.0,2.8.0 |
| hypothesis | 5.41.2,6.68.2 |
| intltool | 0.51.0,0.51.0 |
| jbigkit | 2.1,2.1 |
| json-c | 0.16 |
| libGLU | 9.0.1,9.0.2 |
| libXp | 1.0.3 |
| libarchive | 3.4.3,3.6.1 |
| libcircle | 0.3 |
| libdeflate | 1.15 |
| libdrm | 2.4.102,2.4.114 |
| libepoxy | 1.5.10 |
| libevent | 2.1.12 |
| libfabric | 1.11.0,1.16.1 |
| libffi | 3.3,3.4.4 |
| libgd | 2.3.0,2.3.1 |
| libgeotiff | 1.6.0,1.7.1 |
| libgit2 | 1.1.0,1.5.0 |
| libglvnd | 1.3.2,1.6.0 |
| libiconv | 1.16,1.17 |
| libjpeg-turbo | 2.0.5,2.1.4 |
| libogg | 1.3.4,1.3.5 |
| libopus | 1.3.1 |
| libpciaccess | 0.16,0.17 |
| libpng | 1.2.59,1.6.37,1.6.38 |
| libreadline | 6.2,8.0,8.2 |
| libsigc++ | 2.10.8 |
| libsndfile | 1.0.28,1.2.0 |
| libsodium | 1.0.18 |
| libtirpc | 1.3.1,1.3.3 |
| libtool | 2.4.6,2.4.7 |
| libunwind | 1.4.0,1.6.2 |
| libvorbis | 1.3.7,1.3.7 |
| libwebp | 1.3.1 |
| libxml++ | 2.40.1 |
| libxml2 | 2.9.10,2.10.3 |
| libxslt | 1.1.34,1.1.37 |
| libyaml | 0.2.5 |
| lwgrp | 1.0.3 |
| lxml | 4.9.2 |
| lz4 | 1.9.2,1.9.4 |
| make | 4.4.1 |
| makeinfo | 6.7 |
| matlab-proxy | 0.14.0,0.15.1 |
| matplotlib | 3.7.0 |
| miniconda | 23.5.2,24.3.0,24.7.1 |
| mm-common | 1.0.4 |
| motif | 2.3.8 |
| mpi4py | 3.1.4 |
| mpifileutils | 0.11.1 |
| ncurses | 6.2,6.3,6.3 |
| netCDF | 4.7.4,4.9.0 |
| nettle | 3.6,3.8.1 |
| networkx | 3.0 |
| nlohmann_json | 3.11.2 |
| nodejs | 20.11.1 |
| numactl | 2.0.13,2.0.16 |
| p7zip | 17.04 |
| patchelf | 0.12,0.17.2 |
| picard | 3.0.0 |
| pigz | 2.7 |
| pixman | 0.40.0,0.42.2 |
| pkg-config | 0.29.2,0.29.2 |
| pkgconf | 1.8.0,1.9.3 |
| pkgconfig | 1.5.1 |
| printproto | 1.0.5 |
| pybind11 | 2.6.0,2.10.3 |
| re2c | 2.0.3 |
| ruamel.yaml | 0.17.21 |
| samblaster | 0.1.26 |
| scikit-build | 0.17.2 |
| snappy | 1.1.8,1.1.9 |
| tbb | 2021.10.0 |
| tcsh | 6.24.07 |
| utf8proc | 2.8.0 |
| util-linux | 2.36,2.38.1 |
| x264 | 20201026,20230226 |
| x265 | 3.3,3.5 |
| xextproto | 7.3.0 |
| xorg-macros | 1.19.2,1.19.3 |
| zlib | 1.2.11,1.2.12,1.2.12 |
| zstd | 1.4.5,1.5.2 |

Partitions and Hardware

Milgram is made up of several kinds of compute nodes. We group them into (sometimes overlapping) Slurm partitions meant to serve different purposes. By combining the --partition and --constraint Slurm options you can more finely control what nodes your jobs can run on.
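
As a sketch of combining the two options, the batch script below asks for the scavenge partition but restricts the job to nodes carrying the `cascadelake` feature, a name taken from the Node Features column of the tables on this page. The time limit and CPU count are illustrative values, not recommendations.

```shell
#!/bin/bash
# Run in the scavenge partition, but only on nodes tagged "cascadelake".
#SBATCH --partition=scavenge
#SBATCH --constraint=cascadelake
#SBATCH --time=01:00:00
#SBATCH --cpus-per-task=2

# Report where the job landed. SLURM_JOB_PARTITION is set by Slurm inside a job;
# outside Slurm the fallback text is printed instead.
node_name="$(uname -n)"
echo "Running on ${node_name}; partition: ${SLURM_JOB_PARTITION:-not set (outside Slurm)}"
```

Slurm also accepts several features in one constraint, joined with `&` (all required) or `|` (any one), for example `--constraint="icelake|cascadelake"`; quote the value so the shell does not interpret those characters.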

Job Submission Limits

- You are limited to 4 interactive app instances (of any type) at one time. Additional instances will be rejected until you delete older open instances. For OnDemand jobs, closing the window does not terminate the interactive app job. To terminate the job, click the "Delete" button in your "My Interactive Apps" page in the web portal.

- Job submissions are limited to 200 jobs per hour. See the Rate Limits section in the Common Job Failures page for more info.

Interactive Partition Name Change

The 'interactive' and 'psych_interactive' partitions have been renamed to 'devel' and 'psych_devel', respectively. Please adjust your job submissions accordingly.

Public Partitions

See the sections below for more information about the available common-use partitions.

Use the day partition for most batch jobs. This is the default if you don't specify one with --partition.

Request Defaults

Unless you specify otherwise, jobs in the day partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120
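
The default memory request is expressed per CPU, so the total grows with the CPU count. The small worked example below assumes a hypothetical 4-CPU job and relies on megabytes being Slurm's default unit for `--mem-per-cpu`.

```shell
# Hypothetical request: 4 CPUs for a single task.
cpus_per_task=4
# Partition default from the options line above (megabytes per CPU).
mem_per_cpu_mb=5120

# Total memory the job receives if --mem-per-cpu is left at its default.
total_mem_mb=$(( cpus_per_task * mem_per_cpu_mb ))
echo "A ${cpus_per_task}-CPU job with default memory gets ${total_mem_mb} MB"
```

If a job needs more memory than this without more CPUs, raising `--mem-per-cpu` is one way to request it.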

Job Limits

Jobs submitted to the day partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 1-00:00:00 |
| Maximum CPUs per user | 216 |
| Maximum memory per user | 1080G |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 13 | 6240 | 36 | 181 | cascadelake, avx512, 6240, nogpu, standard, common, bigtmp, oldest |

Use the devel partition for jobs with which you need ongoing interaction, such as exploratory analyses or debugging compilation.
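
A minimal sketch of starting an interactive session with `salloc` is shown below. The time, CPU, and memory values are illustrative choices that stay within the devel limits listed in this section. The guard only exists so the snippet can be run harmlessly on a machine without Slurm.

```shell
# Illustrative request, within the devel limits (6 hours, 4 CPUs, 32G per user).
partition="devel"
time_limit="02:00:00"
cpus=2
mem="8G"

if command -v salloc >/dev/null 2>&1; then
    # Request an interactive allocation in the devel partition.
    salloc --partition="${partition}" --time="${time_limit}" \
           --cpus-per-task="${cpus}" --mem="${mem}"
else
    echo "salloc not found; on a login node you would run:"
    echo "salloc --partition=${partition} --time=${time_limit} --cpus-per-task=${cpus} --mem=${mem}"
fi
```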

Request Defaults

Unless you specify otherwise, jobs in the devel partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

Job Limits

Jobs submitted to the devel partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 06:00:00 |
| Maximum CPUs per user | 4 |
| Maximum memory per user | 32G |
| Maximum running jobs per user | 1 |
| Maximum submitted jobs per user | 1 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 2 | 6240 | 36 | 181 | cascadelake, avx512, 6240, nogpu, standard, common, bigtmp, oldest |

Use the week partition for jobs that need a longer runtime than day allows.

Request Defaults

Unless you specify otherwise, jobs in the week partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120
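
Because the default time limit is one hour here as well, a job only benefits from the week partition if it sets `--time` explicitly. The batch script below is a sketch with illustrative values; the three-day limit and eight CPUs are assumptions chosen to sit inside the limits listed for this partition.

```shell
#!/bin/bash
#SBATCH --partition=week
# Time format is days-hours:minutes:seconds; this asks for 3 days.
#SBATCH --time=3-00:00:00
#SBATCH --cpus-per-task=8
#SBATCH --mem-per-cpu=5120

# SLURM_CPUS_PER_TASK is set by Slurm when --cpus-per-task is given;
# fall back to 1 when the script is run outside a job.
threads="${SLURM_CPUS_PER_TASK:-1}"
echo "Starting long-running work with ${threads} thread(s)"
```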

Job Limits

Jobs submitted to the week partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 7-00:00:00 |
| Maximum CPUs per user | 72 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 4 | 6240 | 36 | 181 | cascadelake, avx512, 6240, nogpu, standard, common, bigtmp, oldest |

Use the gpu partition for jobs that make use of GPUs. You must request GPUs explicitly with the --gpus option in order to use them. For example, --gpus=h100:2 would request 2 NVIDIA H100 GPUs for the job.

Request Defaults

Unless you specify otherwise, jobs in the gpu partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

GPU jobs need GPUs!

Jobs submitted to this partition do not request a GPU by default. You must request one with the --gpus option.
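
A minimal sketch of a GPU batch script follows. The type string `h100` is assumed from the GPU Type column of the node table in this section, and the CPU count and time limit are illustrative. The script only reports which GPUs it can see.

```shell
#!/bin/bash
#SBATCH --partition=gpu
# Request one GPU of the assumed type "h100".
#SBATCH --gpus=h100:1
#SBATCH --cpus-per-task=4
#SBATCH --time=02:00:00

# Show the allocated GPU(s) if the NVIDIA tools are present on this node.
if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi
else
    echo "nvidia-smi not found; this does not look like a GPU node"
fi

# Slurm typically exports CUDA_VISIBLE_DEVICES for GPU jobs; "none" is a fallback.
gpu_list="${CUDA_VISIBLE_DEVICES:-none}"
echo "Visible GPUs: ${gpu_list}"
```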

Job Limits

Jobs submitted to the gpu partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 2-00:00:00 |
| Maximum GPUs per user | 8 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | GPU Type | GPUs/Node | vRAM/GPU (GB) | Node Features |
|---|---|---|---|---|---|---|---|
| 3 | 6542 | 48 | 975 | h100 | 4 | 80 | emeraldrapids, avx512, 6542Y, doubleprecision, common, gpu, h100 |
| 1 | 6326 | 32 | 497 | a40 | 4 | 48 | icelake, avx512, pi, 6326, singleprecision, bigtmp, a40 |

Use the scavenge partition to run preemptable jobs on more resources than normally allowed. For more information about scavenge, see the Scavenge documentation.
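
Since scavenge jobs can be preempted, it helps if a job can pick up where it left off. The sketch below assumes the job is submitted with sbatch's `--requeue` flag and that preempted jobs are requeued on this cluster, which depends on configuration described in the Scavenge documentation. The resume logic is a placeholder for your own checkpointing.

```shell
#!/bin/bash
#SBATCH --partition=scavenge
# Allow Slurm to put the job back in the queue if it is preempted.
#SBATCH --requeue
#SBATCH --time=12:00:00

# SLURM_RESTART_COUNT is set by Slurm once a job has been requeued;
# default to 0 on the first run or outside Slurm.
restart_count="${SLURM_RESTART_COUNT:-0}"

if [ "${restart_count}" -gt 0 ]; then
    echo "Requeued ${restart_count} time(s); resume from your last checkpoint here."
else
    echo "First run; start from the beginning."
fi
```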

Request Defaults

Unless you specify otherwise, jobs in the scavenge partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

GPU jobs need GPUs!

Jobs submitted to this partition do not request a GPU by default. You must request one with the --gpus option.

Job Limits

Jobs submitted to the scavenge partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 1-00:00:00 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | GPU Type | GPUs/Node | vRAM/GPU (GB) | Node Features |
|---|---|---|---|---|---|---|---|
| 3 | 6542 | 48 | 975 | h100 | 4 | 80 | emeraldrapids, avx512, 6542Y, doubleprecision, common, gpu, h100 |
| 20 | 6342 | 48 | 478 | | | | icelake, avx512, 6342, bigtmp, nogpu, standard, pi |
| 1 | 6326 | 32 | 497 | a40 | 4 | 48 | icelake, avx512, pi, 6326, singleprecision, bigtmp, a40 |
| 17 | 6240 | 36 | 181 | | | | cascadelake, avx512, 6240, nogpu, standard, common, bigtmp, oldest |
| 10 | 6240 | 36 | 372 | rtx2080ti | 4 | 11 | cascadelake, avx512, 6240, singleprecision, pi, bigtmp, rtx2080ti, oldest |

Private Partitions

With few exceptions, jobs submitted to private partitions are not considered when calculating your group's Fairshare. Your group can purchase additional hardware for private use, which we will make available as a pi_groupname partition. These nodes are purchased by you, but supported and administered by us. After vendor support expires, we retire compute nodes. Compute nodes can range from $10K to upwards of $50K depending on your requirements. If you are interested in purchasing nodes for your group, please contact us.

PI Partitions

Request Defaults

Unless you specify otherwise, jobs in the psych_day partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

Job Limits

Jobs submitted to the psych_day partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 1-00:00:00 |
| Maximum CPUs per group | 500 |
| Maximum memory per group | 2500G |
| Maximum CPUs per user | 350 |
| Maximum memory per user | 1750G |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 19 | 6342 | 48 | 478 | icelake, avx512, 6342, bigtmp, nogpu, standard, pi |

Request Defaults

Unless you specify otherwise, jobs in the psych_devel partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

Job Limits

Jobs submitted to the psych_devel partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 06:00:00 |
| Maximum CPUs per user | 4 |
| Maximum memory per user | 32G |
| Maximum running jobs per user | 1 |
| Maximum submitted jobs per user | 1 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 1 | 6342 | 48 | 478 | icelake, avx512, 6342, bigtmp, nogpu, standard, pi |

Request Defaults

Unless you specify otherwise, jobs in the psych_gpu partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

GPU jobs need GPUs!

Jobs submitted to this partition do not request a GPU by default. You must request one with the --gpus option.

Job Limits

Jobs submitted to the psych_gpu partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 7-00:00:00 |
| Maximum GPUs per user | 20 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | GPU Type | GPUs/Node | vRAM/GPU (GB) | Node Features |
|---|---|---|---|---|---|---|---|
| 10 | 6240 | 36 | 372 | rtx2080ti | 4 | 11 | cascadelake, avx512, 6240, singleprecision, pi, bigtmp, rtx2080ti, oldest |

Request Defaults

Unless you specify otherwise, jobs in the psych_scavenge partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

GPU jobs need GPUs!

Jobs submitted to this partition do not request a GPU by default. You must request one with the --gpus option.

Job Limits

Jobs submitted to the psych_scavenge partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 7-00:00:00 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | GPU Type | GPUs/Node | vRAM/GPU (GB) | Node Features |
|---|---|---|---|---|---|---|---|
| 20 | 6342 | 48 | 478 | | | | icelake, avx512, 6342, bigtmp, nogpu, standard, pi |
| 10 | 6240 | 36 | 372 | rtx2080ti | 4 | 11 | cascadelake, avx512, 6240, singleprecision, pi, bigtmp, rtx2080ti, oldest |

Request Defaults

Unless you specify otherwise, jobs in the psych_week partition run with the following salloc and sbatch options.

--time=01:00:00 --nodes=1 --ntasks=1 --cpus-per-task=1 --mem-per-cpu=5120

Job Limits

Jobs submitted to the psych_week partition are subject to the following limits:

| Limit | Value |
|---|---|
| Maximum job time limit | 7-00:00:00 |
| Maximum CPUs per group | 350 |
| Maximum memory per group | 2000G |
| Maximum CPUs per user | 250 |
| Maximum memory per user | 1500G |
| Maximum CPUs in use | 448 |

Available Compute Nodes

Requests for --cpus-per-task and --mem can't exceed what is available on a single compute node.

| Count | CPU Type | CPUs/Node | Memory/Node (GiB) | Node Features |
|---|---|---|---|---|
| 20 | 6342 | 48 | 478 | icelake, avx512, 6342, bigtmp, nogpu, standard, pi |

Storage

/gpfs/milgram is Milgram's primary filesystem where home, project, and scratch60 directories are located. For more details on the different storage spaces, see our Cluster Storage documentation.

You can check your current storage usage and limits by running the getquota command. Note that the per-user usage breakdown updates only once daily.
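
Because the quotas on this page include file-count limits, it can be useful to see how many files a directory holds between daily quota updates. The sketch below is a rough check using standard tools rather than getquota. It counts regular files only, which may not match exactly how the filesystem counts toward the quota. The target directory is a placeholder.

```shell
# Directory to inspect; "." is a placeholder for one of your own directories.
target_dir="."

# Count regular files under the directory (tr strips padding some wc versions add).
file_count=$(find "${target_dir}" -type f | wc -l | tr -d ' ')
echo "${target_dir} contains ${file_count} regular files"
```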

For information on data recovery, see the Backups and Snapshots documentation.

Warning

Files stored in scratch60 are purged if they are older than 60 days. You will receive an email alert one week before they are deleted. Artificial extension of scratch file expiration is forbidden without explicit approval from the YCRC. If you need additional longer-term storage, please purchase storage.

| Partition | Root Directory | Storage | File Count | Backups | Snapshots |
|---|---|---|---|---|---|
| home | /gpfs/milgram/home | 125GiB/user | 500,000 | Yes | >=2 days |
| project | /gpfs/milgram/project | 1TiB/group, increase to 4TiB on request. Psych PIs: 10TiB/group; increase with Nick/Kia | 5,000,000 | Yes | >=2 days |
| scratch60 | /gpfs/milgram/scratch60 | 20TiB/group | 15,000,000 | No | No |